If you open any YouTube video that has a link to an external URL in its description, you may notice that the link doesn't point to the target URL itself. Instead, it points to a YouTube redirection mechanism (https://www.youtube.com/redirect?…), with the target URL passed to it as a parameter. In such a case, the link has the following structure:

`https://www.youtube.com/redirect?q=[target_URL]&redir_token=[token]&event=video_description&v=[video_ID]`
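To make this structure more concrete, here is a minimal Python sketch that splits such a link into its parameters using only the standard library. It assumes the four parameters listed above and nothing else. The function name `parse_redirect_link`, the token value `EXAMPLETOKEN` and the video ID `abc123` are hypothetical placeholders, not real values.

```python
from urllib.parse import urlsplit, parse_qs


def parse_redirect_link(link: str) -> dict:
    """Split a YouTube redirect link into its query parameters.

    Assumes the structure q / redir_token / event / v described above;
    missing parameters are returned as None.
    """
    parts = urlsplit(link)
    if parts.netloc != "www.youtube.com" or parts.path != "/redirect":
        raise ValueError("not a YouTube redirect link")
    # parse_qs percent-decodes the values and returns a list per key;
    # keep only the first value of each parameter.
    params = {key: values[0] for key, values in parse_qs(parts.query).items()}
    return {name: params.get(name) for name in ("q", "redir_token", "event", "v")}


# Hypothetical example link (the token and video ID are made up).
link = ("https://www.youtube.com/redirect?q=https%3A%2F%2Fexample.com%2F"
        "&redir_token=EXAMPLETOKEN&event=video_description&v=abc123")
print(parse_redirect_link(link))
```

Note that the target URL in `q` is percent-encoded in the link, so it has to be decoded (which `parse_qs` does) before it is readable.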

Since there is a `redir_token` parameter in the URL, one might assume that the redirect mechanism isn't open, i.e. that it can't be used to redirect to an arbitrary URL. One would, however, be only half right.

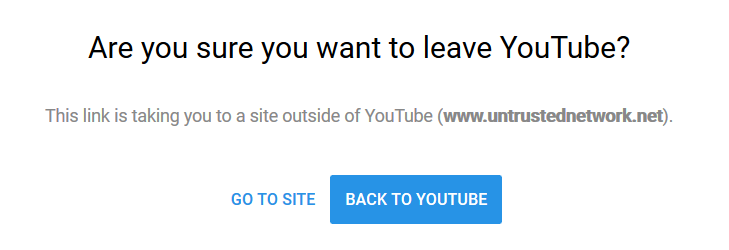

The value of the token seems to be tied to the current YouTube session (though there isn't any obvious correlation between the values of the relevant cookies and the token). And while the parameters `event` and `v` are optional, if you try to use the redirection mechanism without the `redir_token` parameter, or with an invalid value of this parameter, you will be greeted with the following message:

You may try this out for yourself using this link. So far, everything seems to be in order.

A problem, if only a small one, starts to become obvious when we try to use a valid token along with a different URL (i.e. we copy a valid link, perhaps delete the optional parameters, and change the value of the parameter `q`). In this case, the browser will indeed be redirected (with HTTP status code 303) to the new URL, because the tokens are in no way bound to the value of `q`.
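The manipulation described in the previous paragraph amounts to a simple query-string rewrite. The following Python sketch (the function name `retarget_redirect_link` and the example values are hypothetical) keeps the `redir_token` from an existing link, drops the optional `event` and `v` parameters, and substitutes a new `q`. It only builds the URL. Whether YouTube still accepts such a link today is outside what this code can tell you.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qs, urlencode


def retarget_redirect_link(link: str, new_target: str) -> str:
    """Reuse the redir_token of an existing redirect link with a new target.

    Only q and redir_token are kept; the optional event and v
    parameters are dropped, as described in the text.
    """
    parts = urlsplit(link)
    token = parse_qs(parts.query).get("redir_token")
    if not token:
        raise ValueError("link has no redir_token parameter")
    # urlencode percent-encodes the new target URL (":" -> %3A, "/" -> %2F).
    query = urlencode({"q": new_target, "redir_token": token[0]})
    return urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))


# Hypothetical example: the token is made up.
link = ("https://www.youtube.com/redirect?q=https%3A%2F%2Fexample.com%2F"
        "&redir_token=EXAMPLETOKEN&event=video_description&v=abc123")
print(retarget_redirect_link(link, "https://example.org/"))
```

The rewritten link still starts with `https://www.youtube.com/redirect?`, which is exactly why it looks trustworthy to the person receiving it.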

This means that if you can get a valid redirect link from a user who has an active YouTube session, you could modify it so that, if this user opened it, it would redirect their browser to the URL of your choice. As the tokens seem to last for approximately 24 hours (although I tried to determine the maximum age of a token on only one occasion, so don't quote me on it), one could hypothetically use this vulnerability in a real-world scenario. It should probably be called “partially missing input validation”, but “half-open redirect” will do.

Although it is almost completely useless for malicious phishing campaigns, it could be used quite effectively against, for example, one's colleagues and/or friends (e.g. “Jack, could you please send me the link under this video? Thank you. Now, here is a link to a video you're going to love…”). Plus, it might be a good example, for any security awareness classes out there, of the dangers of clicking on seemingly safe links in e-mails.

Since Google replied to me that they don’t intend to fix this small vulnerability and don’t mind if I publish it, use it (ethically, please) as you see fit.

It should be added that there seems to be some regularity in the values of the tokens being generated (e.g. when a page is refreshed). At first glance, however, there doesn't seem to be any obvious way to use this regularity to craft valid tokens, although I didn't spend much time verifying that.